Why Enterprise AI Fails (And How to Fix It)

Category: Enterprise AI

Publish Order: 1/5

---

Anyone who has tried to deploy AI in a real enterprise has seen the same pattern: the demo works, the pilot looks promising, and production stalls.

That failure is usually blamed on model quality. That is almost always the wrong diagnosis.

Enterprise AI rarely fails because the model is weak. It fails because the surrounding platform was never designed for enterprise controls in the first place.

The Enterprise AI Failure Pattern

Week 1: "This is impressive. It answered our questions and summarized internal material well."

Week 3: "Why is it surfacing code and content we did not explicitly approve?"

Week 8: "Security is asking for an audit trail. We only have chat history."

Week 12: "We cannot prove what it accessed, what it executed, or whether policy was enforced."

That is where most enterprise AI efforts break. Not at the model layer. At the control layer.

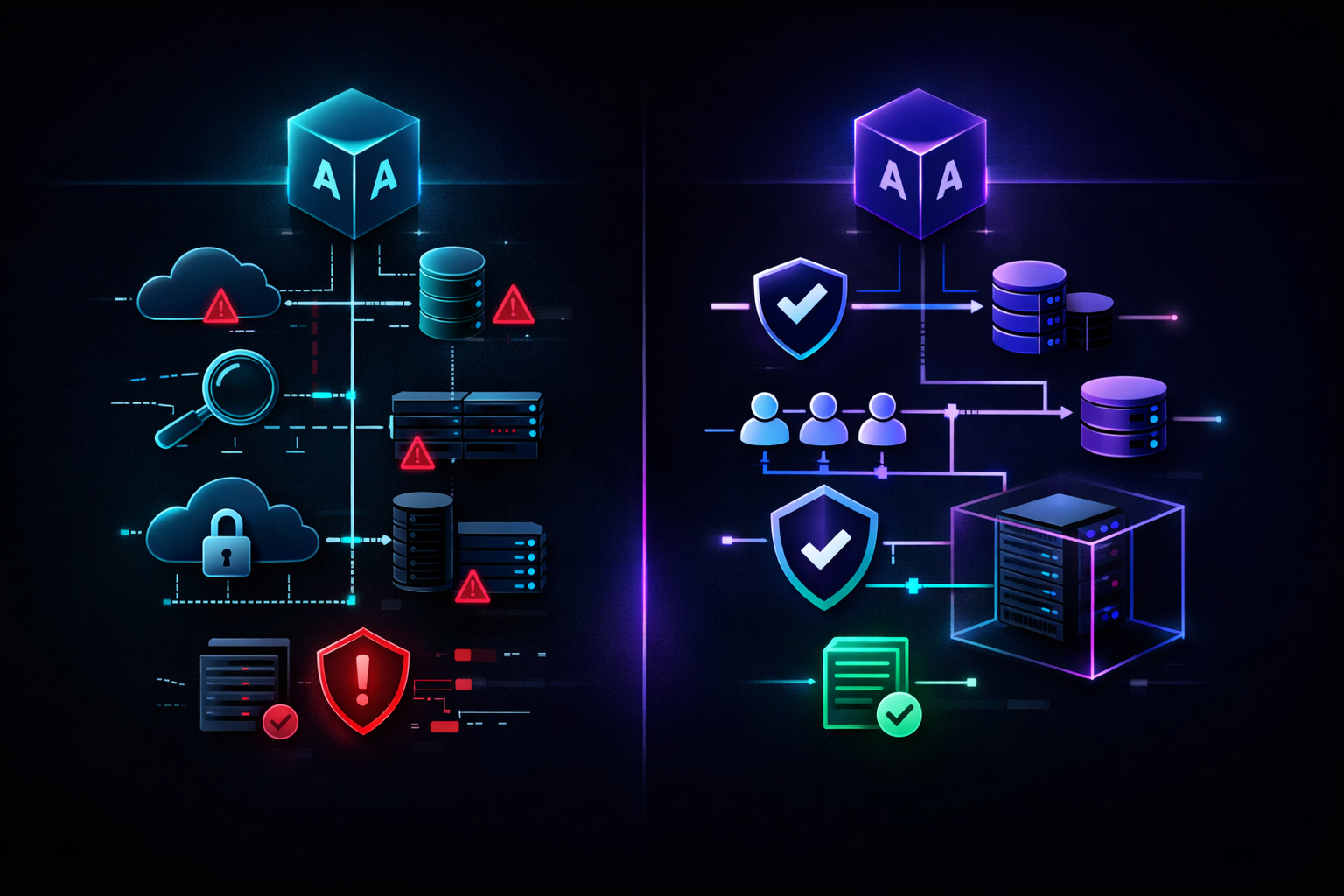

What Actually Fails

1. Audit Trails Are Optional

Most AI platforms log prompts and responses. That is not auditability.

Enterprise teams need to know what data was accessed, what tools were invoked, what policy checks ran, and what happened to the result. A chat transcript is not a compliance artifact.

The fix: append-only audit logs with cryptographic hash chaining. Every event is linked to the previous one by its hash, so you can verify integrity instead of trusting screenshots and platform claims.
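To make the idea concrete, here is a minimal sketch of hash chaining in Python. It is an illustration under stated assumptions, not a reference implementation: the `AuditLog` class, the `verify_chain` function, the entry fields (`seq`, `event`, `prev_hash`, `hash`), and the all-zero genesis value are hypothetical names chosen for this example, and the log lives in memory rather than in durable storage.

```python
import hashlib
import json

# Assumption: the first entry chains to a fixed all-zero placeholder hash.
GENESIS_HASH = "0" * 64


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys, fixed separators) keeps the hash stable.
    payload = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    """In-memory, append-only log; each entry commits to the one before it."""

    def __init__(self):
        self._entries = []

    def append(self, event: dict) -> dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        body = {"seq": len(self._entries), "event": event, "prev_hash": prev_hash}
        entry = dict(body, hash=_digest(body))
        self._entries.append(entry)
        return entry

    def entries(self) -> list:
        # Return copies so callers cannot mutate the stored entries in place.
        return [dict(e) for e in self._entries]


def verify_chain(entries: list) -> bool:
    """Recompute every hash and check that each entry links to its predecessor."""
    prev_hash = GENESIS_HASH
    for seq, entry in enumerate(entries):
        body = {
            "seq": entry["seq"],
            "event": entry["event"],
            "prev_hash": entry["prev_hash"],
        }
        if (
            entry["seq"] != seq
            or entry["prev_hash"] != prev_hash
            or entry["hash"] != _digest(body)
        ):
            return False
        prev_hash = entry["hash"]
    return True
```

Editing any recorded event changes its hash, which breaks the link stored in the next entry, so `verify_chain` returns `False`. A chain alone does not detect someone rewriting the entire log or truncating its tail. A real deployment would also need to anchor the latest hash somewhere the log writer cannot modify.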

2. Access Control Is a Label, Not a Policy

A lot of vendors say they support roles. What they mean is admin and user.

That is not enterprise access control. Enterprise environments need policy-driven RBAC that maps to real collections, real tools, real users, and real network boundaries.

The fix: enforce policy at execution time, not just in the UI. The system should decide whether a user can access a collection, invoke a tool, or run an action before the AI does it.
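A minimal sketch of execution-time enforcement might look like the following. The `Grant`, `PolicyEngine`, and `PolicyDenied` names, the action strings, and the user-to-role mapping are all hypothetical, and the example leaves out network boundaries for brevity. What it shows is that the check sits in the same code path that runs the operation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    role: str
    action: str    # e.g. "read_collection" or "invoke_tool" (illustrative)
    resource: str  # collection or tool name


class PolicyDenied(Exception):
    """Raised when no grant covers the requested action."""


class PolicyEngine:
    def __init__(self, grants, user_roles):
        self._grants = set(grants)
        self._user_roles = user_roles  # user name -> set of role names

    def is_allowed(self, user: str, action: str, resource: str) -> bool:
        # Deny unless some role held by the user carries a matching grant.
        roles = self._user_roles.get(user, set())
        return any(Grant(role, action, resource) in self._grants for role in roles)

    def execute(self, user: str, action: str, resource: str, operation):
        # The check runs here, immediately before the operation,
        # so hiding a button in the UI is never the only control.
        if not self.is_allowed(user, action, resource):
            raise PolicyDenied(f"{user} may not {action} on {resource}")
        return operation()
```

A caller would wrap each retrieval or tool call, for example `engine.execute("alice", "read_collection", "contracts", fetch_contracts)`, where `fetch_contracts` stands in for whatever the assistant was about to do. Unknown users and unlisted resources are denied because no grant matches them.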

3. Tool Execution Is Unconstrained

The biggest hidden gap in enterprise AI is not what the model can read. It is what the model can do.

If an AI assistant can run shell commands, Git operations, or internal APIs without a deny-by-default gateway, then the platform is not enterprise-ready.

The fix: allowlist-based tool execution behind an explicit gateway. If a tool is not approved, it does not run.
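As a rough sketch, a deny-by-default gateway can be very small. The `ToolGateway` class, its `approve` and `run` methods, and the `ToolNotAllowed` exception are hypothetical names for this example. A production gateway would presumably also validate arguments and record each call in the audit log.

```python
class ToolNotAllowed(Exception):
    """Raised when a tool that was never approved is requested."""


class ToolGateway:
    def __init__(self):
        # Tool name -> callable. An empty registry means nothing can run.
        self._allowed = {}

    def approve(self, name: str, handler) -> None:
        self._allowed[name] = handler

    def run(self, name: str, **kwargs):
        handler = self._allowed.get(name)
        if handler is None:
            # Deny by default: unknown tools never reach execution.
            raise ToolNotAllowed(f"tool {name!r} is not on the allowlist")
        return handler(**kwargs)


gateway = ToolGateway()
gateway.approve("word_count", lambda text: len(text.split()))
```

Here `gateway.run("word_count", text="audit every call")` executes the approved handler, while `gateway.run("shell", cmd="rm -rf /")` raises `ToolNotAllowed` because nothing named `shell` was ever approved.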

4. Air-Gap Is Treated Like an Edge Case

For regulated industries, defense, and sensitive internal environments, internet access cannot be assumed. A platform that requires it is disqualified.

If the platform depends on external telemetry, license checks, model APIs, or update services, then it is not deployable in the environments that care most about governance.

The fix: fully on-prem or private-cloud deployment with no required external dependencies.
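One way to check for hidden external dependencies is to audit the configured endpoints before deployment. The sketch below assumes a flat mapping of dependency names to URLs and treats `.internal` as the organization's private DNS suffix. Both are assumptions for illustration, as are the function names. It inspects URLs only and performs no DNS resolution, so it is a pre-flight sanity check rather than proof of isolation.

```python
import ipaddress
from urllib.parse import urlsplit


def is_internal(url: str, internal_suffixes=(".internal",)) -> bool:
    """Return True if the URL's host is a private/loopback IP or an internal name."""
    host = urlsplit(url).hostname
    if host is None:
        return False
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Not an IP literal: fall back to the (assumed) internal DNS suffixes.
        return host == "localhost" or host.endswith(tuple(internal_suffixes))
    return addr.is_private or addr.is_loopback


def external_dependencies(endpoints: dict) -> list:
    """List the names of configured endpoints that point outside the boundary."""
    return sorted(name for name, url in endpoints.items() if not is_internal(url))


config = {
    "model_api": "http://models.corp.internal/v1",
    "license_check": "https://10.0.0.5:8443/check",
    "telemetry": "https://telemetry.example.com/ingest",
}
```

With this sample configuration, `external_dependencies(config)` returns `["telemetry"]`, flagging the one endpoint that would fail in an air-gapped environment.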

What Enterprise AI Actually Requires

Enterprise AI is not "cloud AI with extra controls bolted on later."

It means building around constraints from day one:

- Provable audit, not just logs

- Policy-enforced access, not just roles

- Controlled execution, not just prompt safety

- Deployment where internet access may not exist

- Predictable governance boundaries for security and compliance teams

That is the difference between a demo and a platform.

The Better Way to Evaluate AI Platforms

Stop asking only whether the model is accurate.

Ask:

- Can you prove what happened, step by step?

- Can you enforce access at execution time?

- Can you restrict tools with deny-by-default policy?

- Can you deploy in a private or air-gapped environment?

If the answer is no, the AI project does not have an enterprise path. It has a demo path.

Final Point

Most enterprise AI failures are not model failures.

They are architecture failures, governance failures, and platform failures.

That is exactly why the market is moving away from generic AI wrappers and toward private enterprise AI platforms that are built for auditability, policy enforcement, and real deployment constraints from the start.

---